By: Dianne Crocker, LightBox research director, and Alan Agadoni, senior VP, LightBox EDR

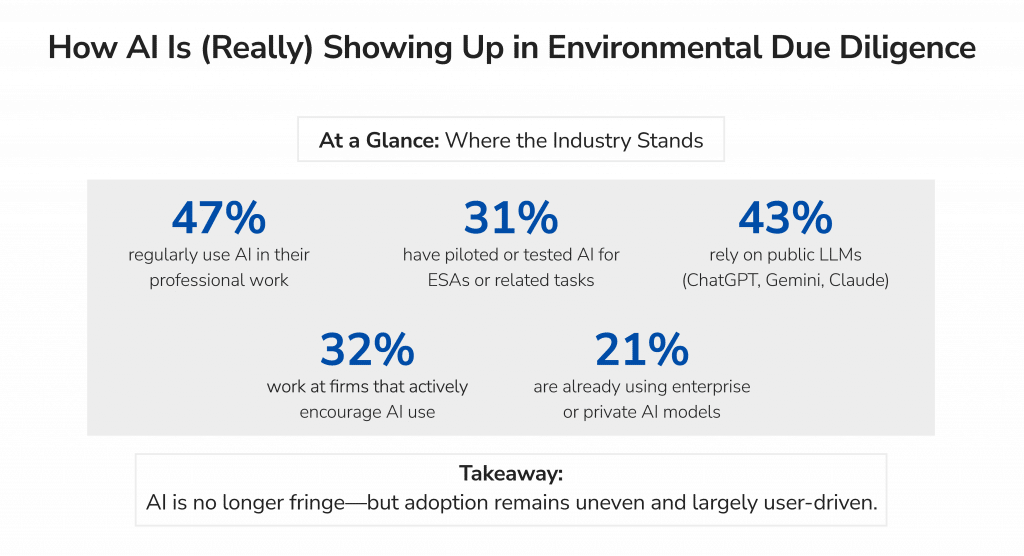

In the March issue of EM Magazine, Alan Agadoni and Dianne Crocker examine how environmental professionals are approaching artificial intelligence in due diligence workflows. Drawing on findings from the LightBox 2026 AI Benchmark Survey, which gathered responses from environmental professionals across the U.S., the article portrays an industry cautiously testing AI integration to improve quality and efficiency. The survey reflected a wide range of experience, including the use of public general-purpose AI tools, private enterprise deployments of large language models (LLMs), and purpose-built AI applications, all while organizations work to establish clear guidelines for confidentiality and work product quality.

One of the most striking findings of the AI Benchmark Survey is how polarized the industry remains. Respondents were fairly evenly split between those who see AI as a powerful productivity tool and those who have serious concerns about accuracy, privacy, and the potential erosion of professional judgment. The survey also highlighted a gap between usage and oversight. While a relatively high percentage of environmental professionals are using AI, many also acknowledge that formal training, governance, and internal guidance have not kept pace with adoption.

Privacy and Security Concerns

Nearly half (43%) of respondents are relying on public versions of foundation models like ChatGPT, Gemini, and Claude while 21% report using private enterprise deployments. When asked about their top concerns surrounding AI, environmental consultants cited output accuracy and reliability (83%), followed by legal liability and accountability (61%), and data security and privacy (46%). These concerns likely reflect how AI tools are being deployed at this early stage of experimentation and adoption. Public, shared AI applications can increase these risks, particularly when sensitive project data or client information is involved. By contrast, licensed enterprise versions of AI platforms and integrated, purpose-built solutions can provide stronger safeguards around data privacy, security, and confidentiality.

As trusted data providers and technology partners increasingly integrate AI into professional workflows, broader adoption of enterprise-grade deployments may help address many of the concerns currently slowing wider use of AI solutions across the environmental consulting industry.

Governance Gaps and Interpretation Risk

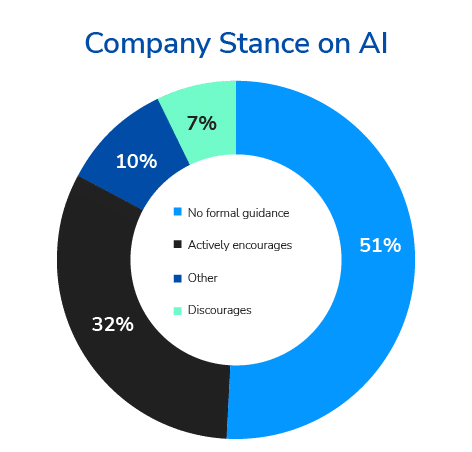

The survey also surfaced a gap between consultants’ experimentation with AI and organizational readiness to manage its responsible use. Almost half (47%) of respondents report using general-purpose AI tools in their professional work, most commonly for research, data review, and document summarization. Yet formal guardrails remain limited: more than half of firms (51%) report having no specific guidance, and fewer than one in five have established formal guidelines. One respondent summed up the hesitation bluntly: “We’re taking a wait and see approach as there are just too many legal issues related to confidentiality and liability.”

Source: LightBox AI Benchmark Survey Report of Environmental Professionals

Training is even scarcer. More than three-quarters of respondents report receiving no AI training, and formal validation of AI outputs is rare.

Several survey respondents described situations where clients or downstream stakeholders ran Phase I ESA report findings through general-purpose AI models and reached inaccurate conclusions. In one example, a consultant discovered that a party reviewing a report had used ChatGPT to summarize the findings and concluded there were “no problems,” despite clearly documented environmental conditions in the original analysis.

Experiences like this reinforce why environmental professionals remain cautious. Without governance frameworks in place, AI use becomes an individual judgment call in a liability-sensitive field. Early results suggest this is a risky proposition at best.

AI at the Margins, Humans at the Center

Environmental due diligence is an interpretive discipline that relies on context, regulatory knowledge, and professional judgment. For now, AI remains an assistant rather than a decision-maker, helping professionals process information while leaving interpretation and accountability firmly in human hands.

As governance frameworks, training, and validation standards evolve, the tenets of responsible, ethical, and safe AI use will become clearer across environmental due diligence workflows. Organizations that make good choices while developing defensible practices will be better positioned to integrate evolving AI tools without compromising professional standards.

In their full article, Agadoni and Crocker explore these findings in greater depth, including where AI is already being used in environmental consulting to assist labor-intensive work, the risks of AI-driven interpretation of environmental reports, and the differing visions for AI’s future role in due diligence.

Read the article, packed with additional AI insights, “AI Comes to Environmental Due Diligence—Carefully, Cautiously, and on Human Terms” in the March issue of EM Magazine.